How does SoftFab help you?

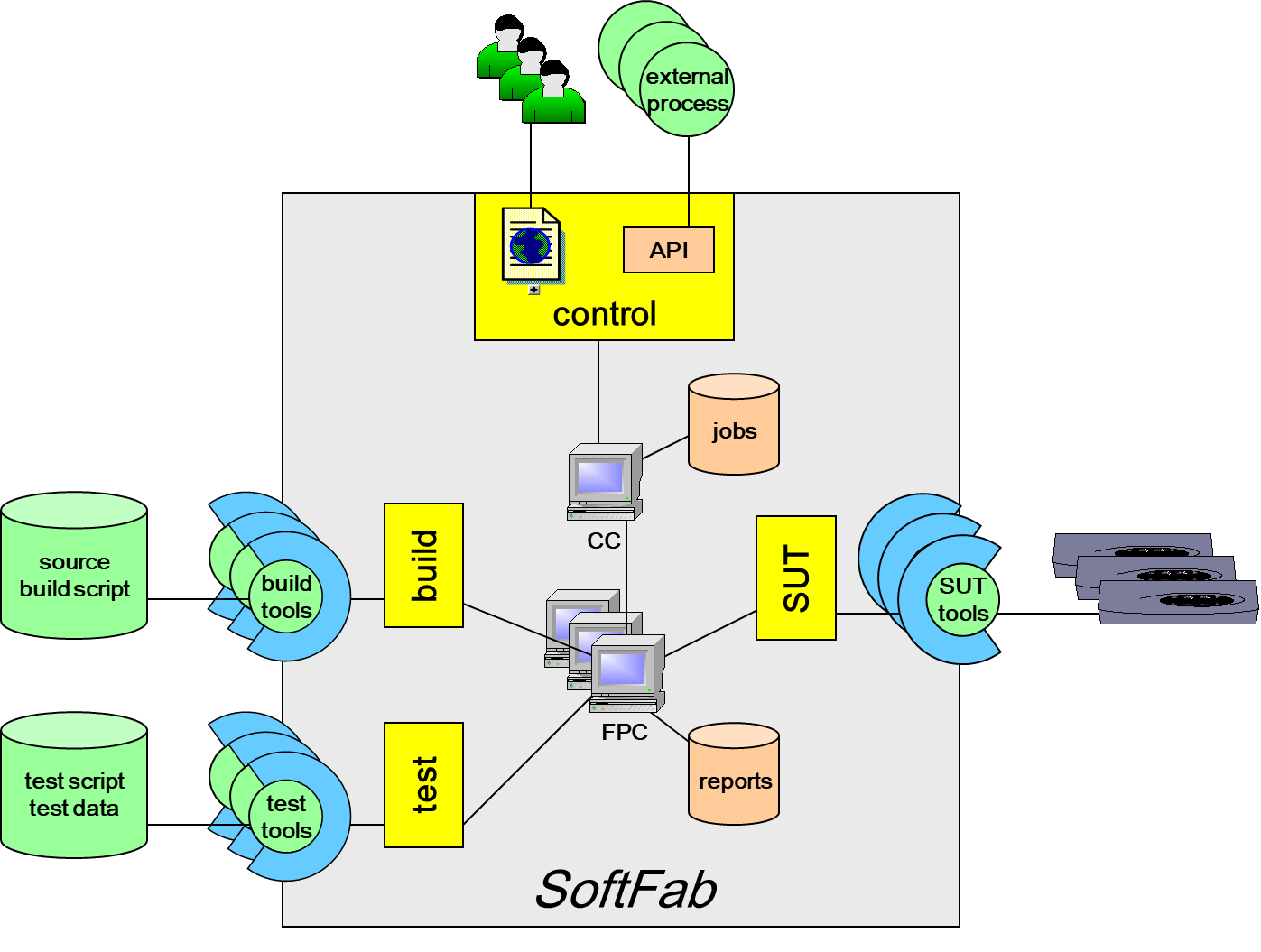

In a server-client model, a SoftFab installation (the factory) consists of a Control Center (the server) that allocates tasks to one or more Task Runners (the clients), which run the tests using the installed test tools.

How these tasks are dispatched is defined by the execution graph, which is an input to the Control Center.

Via the web interface, the user submits build and/or test jobs to the factory. The Control Center, implemented in Python, controls all scheduled jobs. It decides which job has to be executed at which time.

The user can specify exactly which System Under Test (SUT) and which version control baseline they wish to build, choosing from several hardware platforms and a variety of settings.

From this point on, everything is automated.

A job, which executes a pipeline, consists of one or more tasks. The Control Center automatically allocates the tasks that make up each job to individual Task Runners. A typical job may, for example, comprise the following tasks:

- export the right source revision from a version control system,

- build code into an image,

- upload the image,

- boot the SUT, and

- perform the test(s).
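
As a rough illustration of how such a job could be ordered, the sketch below models the five tasks as a small dependency graph and derives a valid dispatch order from it. The task names, the `JOB` mapping and the `dispatch_order` helper are hypothetical and are not part of SoftFab; the real execution graph is configured in the Control Center and may look quite different.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical task names mirroring the list above. Each task maps to
# the set of tasks that must finish before it can start.
JOB = {
    "export": set(),
    "build": {"export"},
    "upload": {"build"},
    "boot": {"upload"},
    "test": {"boot"},
}


def dispatch_order(graph):
    """Return one valid order in which the tasks can be dispatched."""
    return list(TopologicalSorter(graph).static_order())


order = dispatch_order(JOB)
print(order)
```

Because every task here depends on exactly one predecessor, only one order is possible. A graph with independent branches (for example, two test tasks that both depend on `boot`) would allow those tasks to be handed to different Task Runners at the same time.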

The Task Runners regularly report the status of tests being performed to the Control Center. On completion of each test, the results are fully accessible to the user via the user interface in the native format of the installed test tool.

Time-stamped logs for monitoring test-environment behaviour are produced during the execution of a task. They can be accessed at any time via the web interface. Logs are produced by the Task Runners, the actual test tools or the SUT.

Tasks are run sequentially on each Task Runner; as soon as a task finishes, the Task Runner is ready for the next assignment.
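
The one-task-at-a-time behaviour can be pictured with a minimal sketch, assuming a simple first-in, first-out queue of waiting tasks. The `TaskRunner` class and the `assign` and `finish` helpers are illustrative names only; they do not reflect how the Control Center actually selects or communicates with Task Runners.

```python
from collections import deque


class TaskRunner:
    """Hypothetical stand-in for a Task Runner that handles one task at a time."""

    def __init__(self, name):
        self.name = name
        self.current = None  # task in progress, or None when idle

    def is_idle(self):
        return self.current is None


def assign(waiting, runners):
    """Give each idle runner the next waiting task; return the assignments made."""
    assigned = []
    for runner in runners:
        if not waiting:
            break
        if runner.is_idle():
            runner.current = waiting.popleft()
            assigned.append((runner.name, runner.current))
    return assigned


def finish(runner):
    """Mark the runner's current task as done, making the runner idle again."""
    done, runner.current = runner.current, None
    return done


waiting = deque(["build", "test-a", "test-b"])
runners = [TaskRunner("tr1"), TaskRunner("tr2")]

first = assign(waiting, runners)   # both runners are idle and each takes a task
finish(runners[0])                 # tr1 completes its task
second = assign(waiting, runners)  # only tr1 is idle, so it takes the last task
```

In this sketch a busy runner is simply skipped, so a waiting task stays queued until some runner becomes idle.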

Although primarily intended for executing automated builds and tests, SoftFab also includes special features such as:

- a human intervention point that permits a task to be suspended at a specified point to allow intervention by an operator, after which the Task Runner continues executing the task.

- a delayed inspection moment function that flags specific points in the test where, at the end of the test, expert assessment of the results is needed before the final conclusions can be generated.